Defining the Ethical Frontier in Artificial Intelligence

In 2026, “AI ethics” isn’t just about good intentions or writing mission statements; it’s about drawing a hard line where convenience, power, and morality collide. The term now covers everything from how data is sourced, to the fairness of outcomes, to what happens when algorithms cause real harm. It’s less about theoretical philosophy and more about operational decisions: when should you build a model, and when should you walk away from one?

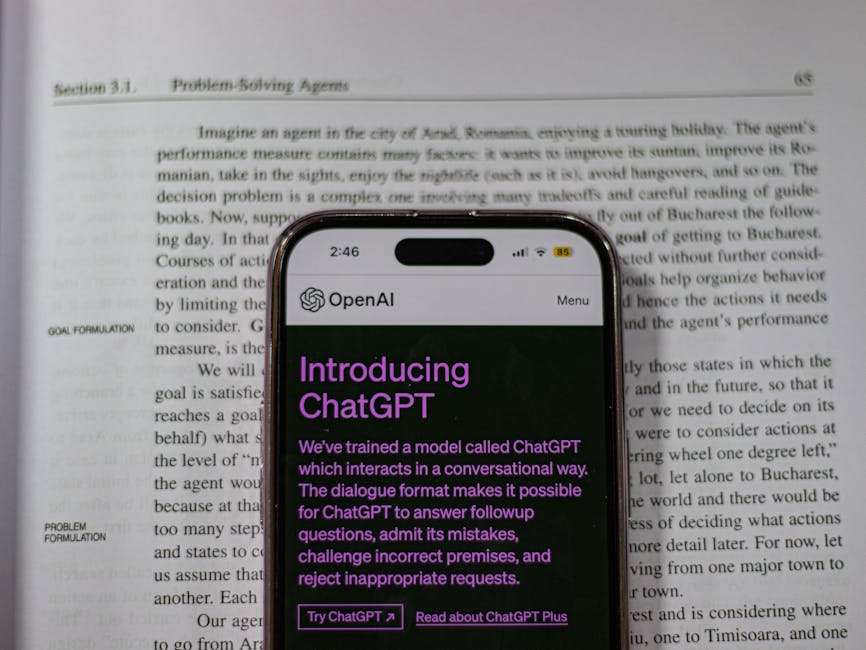

The breakneck speed of AI innovation hasn’t let up. If anything, it’s accelerated. Tools that were bleeding-edge in 2023 are laughably outdated now. Tech is developing faster than any regulator can type a draft bill. Most countries are stuck playing whack-a-mole, patching problems after the damage is already done. Meanwhile, developers are pushed to ship fast, optimize harder, and grow user bases, often without clear ethical guidance.

This creates friction. On one side, engineers and founders chasing what’s technically possible. On the other, ethicists, watchdogs, and users asking: Just because you can, should you? That’s the heart of it. AI ethics in 2026 means knowing where to draw the line and understanding that crossing it has consequences, legal or not.

Bias and Fairness in Algorithmic Decision-Making

When Machines Mirror Our Flaws

Artificial intelligence may be designed to be objective, but in practice, it often mirrors the biases embedded in its data and creators. These biases crop up quietly but can have real-world consequences, especially in high-stakes systems like law enforcement, hiring, and healthcare.

AI systems learn patterns from historical data; if that data reflects past disparities, so will the algorithm.

Forms of bias can stem from training sets, labeling practices, architecture choice, or even user behavior.

The question isn’t just whether AI is biased, but how those biases manifest and who they impact most.

Case Studies: Where Bias Becomes Impact

1. Facial Recognition:

Multiple studies have shown facial recognition algorithms perform worse on women and people of color.

In some cases, misidentification has led to false arrests, raising serious civil rights concerns.

2. Hiring Software:

Automated screening tools have penalized applicants based on gendered keywords or nontraditional education paths.

Some systems favored resumes resembling past (often non-diverse) hires, unintentionally reinforcing exclusion.

3. Predictive Policing:

These tools use historical crime data, which is itself biased due to over-policing in certain areas, to suggest where future crimes might occur.

This can reinforce surveillance in communities that are already over-monitored, exacerbating systemic inequalities.
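
The feedback loop in predictive policing is easier to see with numbers. The sketch below is a toy model, not a description of any real system: it assumes two districts with identical true crime rates, assumes incidents are only recorded in proportion to patrol presence, and reallocates patrols each round based on what was recorded. The function name `simulate_patrols` and all figures are hypothetical.

```python
def simulate_patrols(true_rates, initial_share, rounds=5):
    """Reallocate patrols each round in proportion to recorded incidents.

    Recorded incidents are modeled as true_rate * patrol_share: crime is only
    logged where officers are present to observe it (a simplifying assumption).
    """
    share = list(initial_share)
    history = [share]
    for _ in range(rounds):
        recorded = [rate * s for rate, s in zip(true_rates, share)]
        total = sum(recorded)
        # Next round's patrols follow the recorded data, not the true rates.
        share = [r / total for r in recorded]
        history.append(share)
    return history


# Two districts with the same underlying crime rate, but a skewed starting point.
history = simulate_patrols(true_rates=[0.1, 0.1], initial_share=[0.7, 0.3])
final_share = history[-1]
```

Under these assumptions the 70/30 patrol split never corrects itself, even though both districts have the same true rate: the data keeps confirming the allocation that produced it.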

Pulling Back the Curtain

To address bias, the tech industry is increasingly investing in transparency and third-party audits. These efforts are still uneven, but notable initiatives include:

Bias audits: Independent reviews of algorithmic behavior and outcomes

Explainability tools: Features that show how decisions are made or weighted

Diversity in AI development teams: A push to reduce blind spots at the design level

Moving forward, ethical AI requires constant scrutiny, inclusive design processes, and a recognition that fairness is not a default setting; it’s a choice made at every step of technological development.
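
To make the idea of a bias audit concrete, here is a minimal sketch of one common check: comparing how often each group receives the favorable outcome. The data is invented for illustration, the function names are hypothetical, and the 0.8 cutoff is an assumption borrowed from the “four-fifths” rule of thumb used in US employment guidance. A real audit would look at far more than a single ratio.

```python
from collections import defaultdict


def selection_rates(records):
    """Return the share of favorable outcomes per group.

    `records` is an iterable of (group, selected) pairs, where `selected`
    is True when the system produced the favorable outcome.
    """
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, selected in records:
        totals[group] += 1
        if selected:
            positives[group] += 1
    return {g: positives[g] / totals[g] for g in totals}


def disparate_impact_ratio(rates):
    """Ratio of the lowest group selection rate to the highest."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was selected, so no group is favored
    return min(rates.values()) / highest


# Hypothetical screening outcomes: (group label, passed screening?)
sample = (
    [("A", True)] * 6 + [("A", False)] * 4
    + [("B", True)] * 3 + [("B", False)] * 7
)
rates = selection_rates(sample)
ratio = disparate_impact_ratio(rates)
flagged = ratio < 0.8  # assumed review threshold, not a legal test
```

In this made-up sample, group A passes 60% of the time and group B 30%, so the ratio is 0.5 and the system would be flagged for a closer look. A flag like this is a prompt for investigation, not proof of discrimination.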

Data Privacy and Consent in the Age of AI

Personal data doesn’t just sit on your phone or browser anymore; it moves. Quietly, constantly. From app permissions to retail purchases, data is pulled into pipelines that feed training models, optimize ad placements, or score credit risks. The source could be a smart speaker in your kitchen or a scroll through a fitness app. The destination? Often unclear. Sometimes anonymized. Sometimes not.

AI thrives on volume and behavioral nuance. To get that, algorithms map patterns in user behavior: when you click, what you pause on, who you interact with. The problem: most users don’t know this is happening in real time, or how deep the profiling goes. Informed consent has become less about saying “yes” and more about trying to decode vague terms of service before tapping “accept.”

What’s legal often jars with what feels ethical. Europe’s GDPR gives users real rights, while other regions treat data like currency with no strings attached. This uneven field means AI systems may be built on lax data practices in one country and deployed globally. The result? A tech world where privacy expectations crash up against commercial ambition. The question isn’t just who owns the data but who deserves to use it, and why.

Accountability: Who’s Responsible When AI Goes Wrong?

As artificial intelligence becomes more deeply embedded in critical systems, from healthcare diagnostics to financial forecasting, the question of accountability is no longer theoretical. When AI malfunctions or causes harm, who should be held responsible? Developers, deployers, or the algorithm itself?

The Legal Gray Zone

The current legal framework struggles to assign clear responsibility in AI-related incidents. There are often multiple parties involved:

Developers who design and train the models

Deployers (such as companies or institutions) who implement AI solutions in real-world scenarios

The algorithm, which may evolve through machine learning in ways even its creators didn’t fully anticipate

This complexity blurs liability. If AI software makes an incorrect diagnosis or enacts a biased decision, who’s at fault: the engineer, the company using the tool, or the tool itself?

Cases That Are Redefining Standards

Real-world lawsuits are beginning to define new norms in AI accountability. A few notable examples:

Algorithmic hiring tools have come under legal scrutiny after rejecting qualified candidates based on demographic traits.

Facial recognition misuse, especially in law enforcement settings, has triggered civil rights lawsuits challenging the use of such technologies.

Financial decision engines have led to class-action suits accusing tech firms of discriminatory lending or insurance practices.

These cases are shaping how courts view the chain of liability in AI incidents, setting precedents for future governance.

The Call for Ethical AI Laws

Legal developments are lagging behind technological change. That’s why growing voices within academia, civil society, and even industry are calling for:

Clear liability frameworks that define who’s responsible when automated systems fail

Minimum ethical development standards, particularly in high-stakes AI applications

Oversight authorities to enforce compliance and investigate harm

Audit trails and explainability mechanisms as part of ethical deployment requirements

The momentum for enforceable governance is growing, and it’s not just about regulation. It’s about maintaining public trust in an era when artificial intelligence makes decisions more quickly (and sometimes with more permanence) than any human ever could.
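
The audit trails mentioned in the list above can take many forms. One simple approach, sketched here under assumptions, is a hash-chained log: each recorded decision includes the hash of the entry before it, so quietly editing an old record breaks the chain. The record fields (`model`, `input_id`, `decision`) and function names are hypothetical, and a production system would also need secure storage and access controls.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash for the first entry


def append_entry(log, record):
    """Append `record` to `log`, chaining it to the previous entry's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    payload = json.dumps(record, sort_keys=True)
    digest = hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()
    log.append({"record": record, "prev_hash": prev_hash, "hash": digest})
    return log


def verify(log):
    """Return True if every entry still matches the chain.

    Detects edited or reordered entries; it does not detect entries
    dropped from the end of the log.
    """
    prev_hash = GENESIS
    for entry in log:
        payload = json.dumps(entry["record"], sort_keys=True)
        expected = hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()
        if entry["prev_hash"] != prev_hash or entry["hash"] != expected:
            return False
        prev_hash = entry["hash"]
    return True


# Hypothetical decisions from an automated screening tool.
log = []
append_entry(log, {"model": "screening-v1", "input_id": "applicant-001", "decision": "reject"})
append_entry(log, {"model": "screening-v1", "input_id": "applicant-002", "decision": "advance"})
intact_before = verify(log)

log[0]["record"]["decision"] = "advance"  # someone rewrites history
intact_after = verify(log)
```

Here `intact_before` is true and `intact_after` is false: the tampered entry no longer matches its stored hash. That is the kind of evidence an oversight body or court would need when asking who decided what, and when.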

2026’s Landscape of AI Laws: Patchwork or Progress?

AI regulation in 2026 looks less like a unified legal framework and more like a scattered puzzle. The European Union is pressing ahead with the AI Act, an ambitious, binding set of rules that classifies AI systems by risk level. High-risk tools, like facial recognition in public spaces, face strict oversight. Transparency, accountability, and human oversight aren’t just encouraged; they’re legally required. It’s legislation with real teeth.

The U.S., on the other hand, is still banking on a voluntary approach. Industry-led frameworks and federal guidelines dominate, with regulatory bodies offering soft enforcement and relying on corporate cooperation. Some states are stepping in with their own rules, but patchiness defines the national picture.

This divide raises a bigger question: Can AI governance go global? Some think it might follow the playbook of climate agreements: incremental, consensus-based, and far from perfect, but better than chaos. A treaty-style model could allow countries to bring their own standards to the table while agreeing on a few universal red lines: no black-box algorithms in critical systems, no unauthorized biometric surveillance, no AI without accountability.

Whether this turns into real global cooperation or just diplomatic theater remains to be seen. But one thing is clear: 2026 isn’t the year we figured out AI law. It’s the year we realized we can’t afford to wait much longer.

The Corporate Ethics Arms Race

Tech companies aren’t just selling products anymore; they’re selling trust. With AI deployment moving faster than most laws can catch it, companies are scrambling to position themselves as morally responsible players in a very high-stakes game. The buzzword of the moment? “Ethically aligned.” It sounds good. It prints well. And it’s quickly becoming a badge of social license, especially for firms hunting government contracts or user loyalty.

But here’s the rub: it’s easy to fake. A new breed of PR camouflage is emerging: call it AI washing. Think mission statements filled with ethical promises no one checks. Think glossy reports and advisory boards that sound impressive but carry no bite. Spotting the difference takes a sharp eye. Vague commitments, non-disclosure of audit results, and a pattern of ethics statements that never mention specifics: these are red flags.

Then there are the internal ethics boards. In theory, these should be the conscience of an AI operation. In reality, it’s a mixed bag. Some are staffed with independent experts who aren’t afraid to challenge leadership. Others are packed with company insiders or industry allies who don’t make waves. When ethics boards report directly to marketing or legal, their power shrinks fast.

Bottom line: the arms race to look ethical is on. But users, regulators, and employees need to ask real questions: who enforces the standards, who audits the outcomes, and who’s actually in the room when decisions get made.

Lessons from Parallel Tech Crises

History has a quiet way of giving warnings. Take the global semiconductor shortage. It wasn’t just a supply chain hiccup; it exposed a system built for speed, not resilience or fairness. Entire industries stalled, small players were shoved aside, and end users were left in the dark. The worst part? Very few saw it coming, and fewer took responsibility.

These cracks weren’t just logistical; they were ethical too. Decisions made behind closed doors, lack of communication with stakeholders, and zero transparency about long-term impacts. Sound familiar? AI is walking a similar edge right now. Rapid growth, poorly understood systems, and questionable oversight. Different tech, same blind spots.

What still matters, and always has, is clear: transparency, accountability, and prioritizing the user. These aren’t buzzwords. They’re fundamentals that don’t age. In the middle of hype cycles and innovation sprints, they’re the compass. The past didn’t hand us a playbook, but it did give us patterns. Ignore them, and we’ll repeat the same avoidable mistakes, just this time at scale.

Staying Human in the Loop

Machines are fast. Machines don’t get tired. But they also don’t understand nuance or consequence. That’s why the future of AI needs people in the loop. Hybrid decision-making isn’t just a safety net; it’s a structure. One where algorithms flag, suggest, and support, but humans decide. Whether it’s moderating content, approving medical recommendations, or guiding autonomous systems, keeping a human hand on the wheel adds common sense that code can’t cover yet.
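
What that structure might look like in practice: a routing rule that decides which model suggestions a person must sign off on. The sketch below is one possible design, not a standard. The `Suggestion` type, the label names, and the 0.9 threshold are all hypothetical, and it assumes the model’s confidence score is reasonably calibrated. It also lets low-risk, high-confidence suggestions through automatically; a stricter reading of “humans decide” would send everything to review.

```python
from dataclasses import dataclass


@dataclass
class Suggestion:
    item_id: str
    label: str
    confidence: float  # model's own score in [0, 1]; assumed roughly calibrated


def route(suggestion, high_stakes_labels, threshold=0.9):
    """Decide whether a model suggestion may be auto-applied or needs a person."""
    # High-stakes actions always go to a human, however confident the model is.
    if suggestion.label in high_stakes_labels:
        return "human_review"
    # Uncertain suggestions also go to a human.
    if suggestion.confidence < threshold:
        return "human_review"
    return "auto_apply"


# Hypothetical content-moderation suggestions.
HIGH_STAKES = {"remove_account"}
confident_spam = route(Suggestion("post-1", "spam", 0.97), HIGH_STAKES)
unsure_spam = route(Suggestion("post-2", "spam", 0.62), HIGH_STAKES)
account_ban = route(Suggestion("post-3", "remove_account", 0.99), HIGH_STAKES)
```

Only the first suggestion is applied automatically. The uncertain one and the account removal both land in a reviewer’s queue, which keeps the irreversible calls with a person.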

At the same time, the public can’t just be passive users. Digital literacy is no longer optional; it’s survival. People need to understand what algorithms are doing in the background, what data they’re collecting, and how that data shapes outcomes. If we’re going to live with AI, we need to stop trusting it blindly. Think before you click, scroll, or swipe.

And as AI tools become more embedded, creators and coders have a responsibility to design for ethics from the ground up. That means building in defaults that nudge users toward better choices, labeling AI-generated content clearly, and stress-testing systems not just for performance, but for unintended consequences. Ethical reflexes don’t come from regulation alone; they grow inside teams who ask hard questions early, and often.

In a world ruled by algorithms, keeping the human presence sharp and deliberate is the only way the tech stays worth trusting.